ELLIS Winter School on Foundation Models

In late March, I attended the Foundation Models Winter School, hosted by the European Laboratory for Learning and Intelligent Systems (ELLIS) in Amsterdam. The first day started with Saining Xie (NYU Courant, AMI Labs) explaining why visual representation still matters, Hinrich Schütze (ELLIS Munich) shedding light on internal representations, and Pascal Mettes (ELLIS Amsterdam) introducing hyperbolic foundation models—which almost felt like a political campaign against Euclidean space.

Over the following days, Yossi Gandelsman (Reve, former UC Berkeley) shared his research on model hijacking and steering, Konstantinos Derpanis (York University) revisited interpretability through the lens of universal concepts, Robert Geirhos (Google DeepMind) offered insights into how video models can help spatial understanding, Frank Hutter (ELLIS Tuebingen) highlighted the growing role of foundation models in tabular data, and Ekaterina Shutova (ELLIS Amsterdam) spoke about the challenges of developing multilingual LLMs. On the final day, Nuria Oliver (ELLIS Alicante) began by reflecting on how human biases manifest in foundation models—illustrated by the example of lookism—before presenting work on how effectively LLMs can jailbreak one another. Elisa Ricci (ELLIS Trento) closed the winter school by emphasizing the need to move beyond accuracy and toward trust.

During the panel discussion, ‘From Research to Real-World Impact’, academics seemed to shift their focus when speaking as startup founders, emphasising the constraints of European regulations rather than the advantages of designing systems that reflect our values. Hopefully, future founders will take a different approach. On a more positive note, a poster session featuring PhD students’ research offered a glimpse into exciting ongoing work and left me feeling optimistic about what’s ahead.

Finally, regarding safety: while many speakers touched on the topic, the focus often leaned more toward finding ways to break systems than toward building architectures that ensure safety. Safety assurance for foundation models is clearly difficult, but that’s exactly why it deserves more attention, not less.

SAINTS at York’s College of Benefactors Dinner

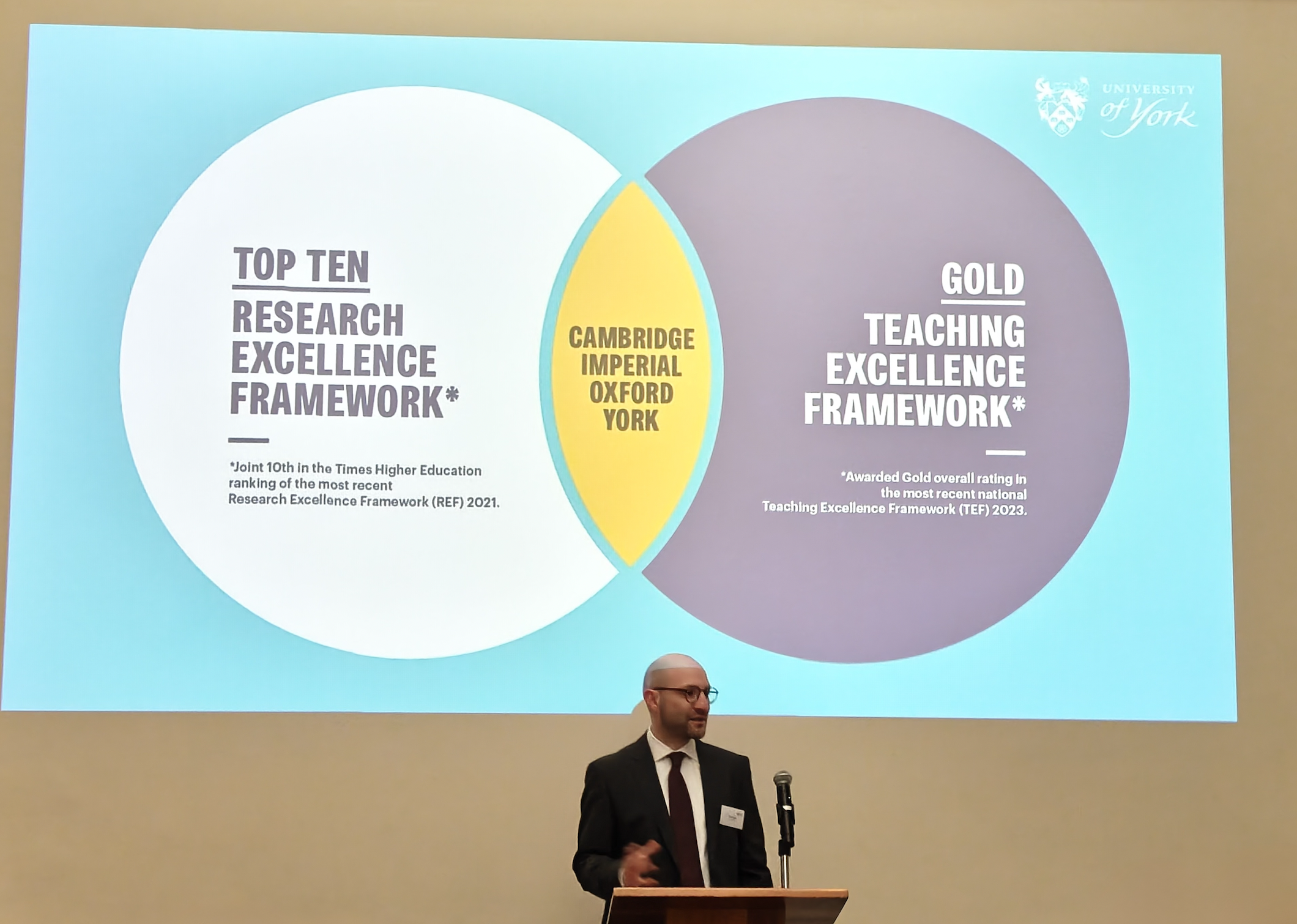

In late March, Ibrahim Habli (SAINTS Director) and PhD researchers Madeleine Neilsen and Prenika Anand attended the University of York’s College of Benefactors dinner at the Royal Society of Chemistry, organised by the Office of Philanthropic Partnerships and Alumni.

In their speeches they reflected on why the University of York, an institution dedicated to the public good with four decades of expertise in the safety of complex systems, is the ideal home for AI safety research. They described how SAINTS’ research and training across engineering, social, legal and ethical dimensions is rooted in the city’s ethos of social justice and ethical enterprise.

The team highlighted SAINTS’ core pillars of interdisciplinarity, collaboration and inclusive research, and emphasised our commitment to advancing evidence‑based policy and practice so AI deployments are safe, acceptable and fair for the communities they serve.

SAINTS at the International Association of Safe and Ethical AI’s conference (IASEAI’26)

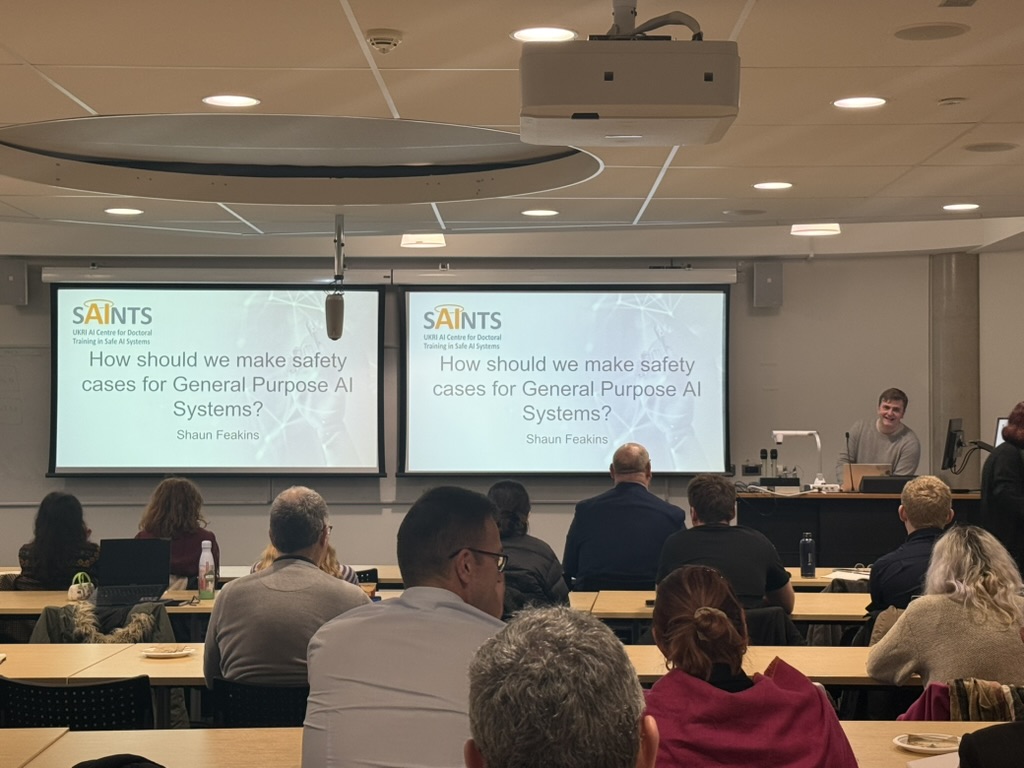

SAINTS PhD Researcher Shaun presented his paper on safety cases for frontier AI systems at the International Association of Safe and Ethical AI’s conference (IASEAI’26) last month. The invitation-only event brought together over 1,300 attendees from industry, policy and academia. Plenary presenters included Yoshua Bengio, Geoffrey Hinton and Stuart Russell. The event was held at UNESCO House, Paris.

Shaun’s paper aimed to bring a much-needed safety-critical perspective to safety cases for increasingly advanced general-purpose AI systems. The paper focuses on recent proposals to make safety cases for general-purpose models themselves, building on earlier research into the distinction between safety cases for models at an “upstream” level, i.e. for the model itself, and safety cases in downstream, safety-critical contexts. The use of safety cases at an upstream level remains a relatively new idea.

Co-authored with his supervisors Ibrahim Habli and Phillip Morgan, the paper focused on the use of safety cases in safety-critical industries, the emerging ecosystem around safety cases for frontier AI systems (particularly those focused on alignment), and how developers and policymakers might integrate perspectives from safety assurance into frontier AI safety cases.

The paper brings together insights formed from deep interrogation into safety cases in safety-critical systems, aided by expertise within York’s faculty, the CfAA and SAINTS team. It applies this knowledge to novel challenges posed by LLMs. His paper is available to read online.

Reflections Post the AI Impact Summit

Prenika Anand, SAINTS second year researcher, reflects on her experience at the AI Impact Summit in Delhi, India in February 2026.

My PhD journey began with writing my proposal after the Bletchley Declaration in the UK, in many ways the beginning of key policy discussions on AI safety and caution. Three years later, I was privileged to be in India for the AI Impact Summit in February 2026 as a UKRI SAINTS CDT researcher. A succinct summary of walking through miles of exhibitions and attending 50+ pre-summit and summit sessions at different venues will take me longer to write, but here I attempt to document my take on the key engagements I had the opportunity to attend. As one may expect, I point out some wins and some opportunities.

The summit did well on what it was already expected to deliver: scale. The visible grandeur of the event, in terms of the size of the venue, the number of summit and fringe event panels each day (200+ over a week), the number of pavilions (13 country pavilions and 300+ tech players I might have witnessed overall) and the sheer choice of academic, industry and policy sessions, was unprecedented compared with the few AI summits I previously attended in the UK and in Geneva. It was definitely not without long queues for security checks and road traffic congestion through the week! Along with the scale of the summit, it was great to see the launch of the Global AI Impact Commons, a collaborative platform designed to help discover, replicate and scale high-impact AI solutions across countries and sectors, and to attend panel discussions on open source AI.

Whilst I commend the breadth of discussions on scale and adoption of AI benefits, they significantly outnumbered the discussions and panels on AI harms, safety and socio-technical risks. I have yet to witness a declaration that advocates for safety as a non-negotiable (binding and not merely voluntary) principle, to ensure that the “Impact” we recognise is accompanied by a proportionate consideration of systemic harms. Still, I recommend listening online to a great socio-technical panel I attended, “From Technical Safety to Societal Impact: Rethinking AI Governance”, whose speakers included Professor Dame Wendy Hall, and reading the relevant publications cited or launched during the summit, including the OECD.AI Index, the AI Incident Reporting Framework for India, and the RAND Global Risk Index for AI-enabled Biological Tools.

The Expo (exhibition) at the summit was organized into 10 thematic pavilions featuring over 300 exhibitors from 30+ countries, with the largest hosted by big tech companies. Most of the exhibition was structured around the key areas of AI transformation, namely Social Good, Human Capital, Inclusion, Safe & Trusted AI, Science, Resilience/Innovation, and Democratizing Resources. It was exciting to visit the UK Pavilion, which also celebrated the UKRI initiatives in AI, including the CDTs. From the India AI stack, it was exciting to learn about our sovereign models, including Sarvam and Vachana, a multilingual speech-to-text model for 12+ Indian languages. Similarly, there were expert demonstrations describing developments in the indigenous compute layer and the data stack (AIKosh). I also found the social impact initiatives by Wadhwani AI and the kiosks on AI-mediated assisted housing in urban India to be relevant for my research.

On February 18, I had the privilege of presenting my research at the Participatory AI Research & Practice Symposium (PAIRS). The symposium provided a dedicated space for deeper dialogue and networking among researchers committed to community involvement in AI. My research, which focuses on AI safety, psychological harms, and the ageing demographic, benefited from the feedback on my academic poster and from cross-disciplinary discussions.

It was an invaluable forum for discovering synergies with international researchers. I’m particularly excited about this new research community I have joined, which will be a great feedback loop for my work. I was also honoured to finally meet Dr. Susan Oman, whose work with the People’s Panel on AI, together with that of Margaret Colling, has been a significant contribution to The Silver LAIning: A SAINTS Podcast.

Lastly, I had the privilege of attending the UK AI Research Showcase and Reception, hosted by the British High Commission in India at the residence of the High Commissioner, Ms. Lindy Cameron CB OBE. It was an invaluable opportunity to hear firsthand from a high-profile delegation, including Deputy Prime Minister David Lammy, Minister for AI and Online Safety Kanishka Narayan and former PM Rishi Sunak. The discussions offered insights into the UK’s strategy on AI, the UK’s leadership in AI safety initiatives, and the growing landscape of joint investments and strategic collaborations between India and the UK. At the same time, there were announcements on the UKRI AI Research and Innovation Strategic Framework.

I also had the opportunity to attend the keynote by Amanda Brock, CEO of OpenUK, and to speak with her personally about my research; her feedback was incredibly kind and encouraging. Beyond the panels, I thoroughly enjoyed the UK AI Talks, where I was pleased to reconnect with my former tutor at Oxford, Andrew Soltan.

To conclude, the summit was an opportunity to proudly represent both my national and professional affiliations. Beyond the assurance of being at home, it gave me an intense week of informing my views, meeting a community of researchers, understanding industry and policy narratives, and reflecting on how these will shape safety research. An international and proportionate focus on safety at forthcoming summits would expand the space and investment for global research in safety methodologies, which is a legitimate ask. This sentiment, shared by other researchers I met, stems from the overall pro-adoption, pro-scale, yet not so pro-safety tone of the final declaration and of the AI investment that the summit achieved.

SAINTS at the Safety-Critical Systems Symposium SSS’26

The Safety-Critical Systems Club (SCSC), a global professional network for sharing knowledge about safety-critical systems, organises the Safety-Critical Systems Symposium (SSS) every year. The symposium includes keynote presentations, invited talks, talks selected by abstract submission, an exhibition, a poster session, workshops and tutorials, an evening banquet, entertainment, “armchair chats,” and social events.

SAINTS CDT researchers Suemaiya Zaman, Shaun Feakins, and Paul Powers attended the 34th Safety-Critical Systems Symposium (SSS’26), held once again in York. The annual symposium brought together safety engineers, researchers, and industry practitioners to explore the latest developments in safety-critical systems engineering, assurance, and governance.

Across parallel streams, the programme covered emerging approaches to assurance, specialist workshops, technical papers, and keynote presentations, with a strong focus this year on the growing role of artificial intelligence and autonomy in safety-critical domains.

Keynote highlights included Professor Harold Thimbleby (Swansea University), who challenged the UK legal presumption that computer evidence is inherently reliable, and Professor Phil Koopman (Carnegie Mellon University), who delivered a compelling closing address on embodied AI safety. Koopman argued that effective AI safety requires literacy across system safety, cybersecurity, machine learning, and human factors, rather than deep expertise in just one area.

Several sessions explored the use of large language models (LLMs) in safety-critical contexts, including their potential role in safety concept development, patient safety governance in healthcare, and predictive accident prevention. Contributors consistently stressed the importance of structured workflows, human oversight, and meaningful evaluation criteria.

During the symposium, Shaun Feakins presented his paper on upstream versus downstream assurance for general-purpose AI systems in safety-critical settings, exploring how safety science must adapt to closed-source models, rapid capability shifts, and complex socio-technical deployment contexts.

Suemaiya also presented a “five-minute pitch” titled “A Novel Hazard Analysis Method in Modular Element Development for Dynamic Systems of Systems.”

SSS’26 provided a valuable opportunity for SAINTS CDT researchers to engage with leading experts in safety-critical systems and to connect their doctoral research with the wider industrial and regulatory landscape shaping the safe development of increasingly autonomous systems.

First Quarterly Workshop of 2025/26

SAINTS were pleased to hold our first quarterly workshop for this academic year on Dec 16th, where we were able to welcome our second cohort of PGRs! The event provided an opportunity for our first cohort to deliver presentations and engage in Q&A sessions about their research. Talks covered a range of topics from presenting the results of experiments involving the private language of LLMs to exploring post-growth frameworks for safe and ethical AI.

Workshop participants included partners from the Lloyd’s Register Foundation, Cambridge Consultants and the Office for Product Safety and Standards (OPSS). In addition to questions from other PGRs and supervisors, our partners contributed to constructive discussions about the research being carried out in SAINTS and its potential societal impacts.

SAINTS visit to Jaguar Land Rover

We spent Wednesday, December 10, 2025, learning about AI safety in the field of automotive. SAINTS visited the Jaguar Land Rover (JLR) site in Warwickshire, in a partner visit hosted by JLR Research. The aim was to learn about how our partner organisation is responding to rapid changes within the Automated Vehicles (AV) landscape across various areas of ML development.

JLR has been at the forefront of British automotive engineering for the last century. It was great to learn in depth about how engineers at JLR are responding to the complex regulatory, engineering and societal challenges posed by AV technologies. We were pleased to hear from a range of presenters across JLR, from ML engineers through to specialists in Functional Safety.

It wasn’t bad to see some prototypes and famous models in the JLR atrium, either!

The day gave rise to various conversations across AI ethics, governance and engineering, with plenty of reflections on the SAINTS CDT interdisciplinary training courses and new perspectives from engineers at JLR. With various new standards introduced recently to complement ISO 26262, the day emphasised the need for close collaboration between industry and research teams, drawing on interdisciplinary viewpoints, in order to reflect the changes we’ll see as AV technologies are increasingly deployed on the UK’s roads and beyond.

We’re looking forward to future events and collaborations with JLR, one of the industry partners to the SAINTS CDT. The visit prompted a variety of interesting discussions and questions for follow-up. We’re also looking forward to welcoming the JLR Research team to future workshops and to taking the day’s discussions towards action-oriented, practical research based in through-life responsible innovation.

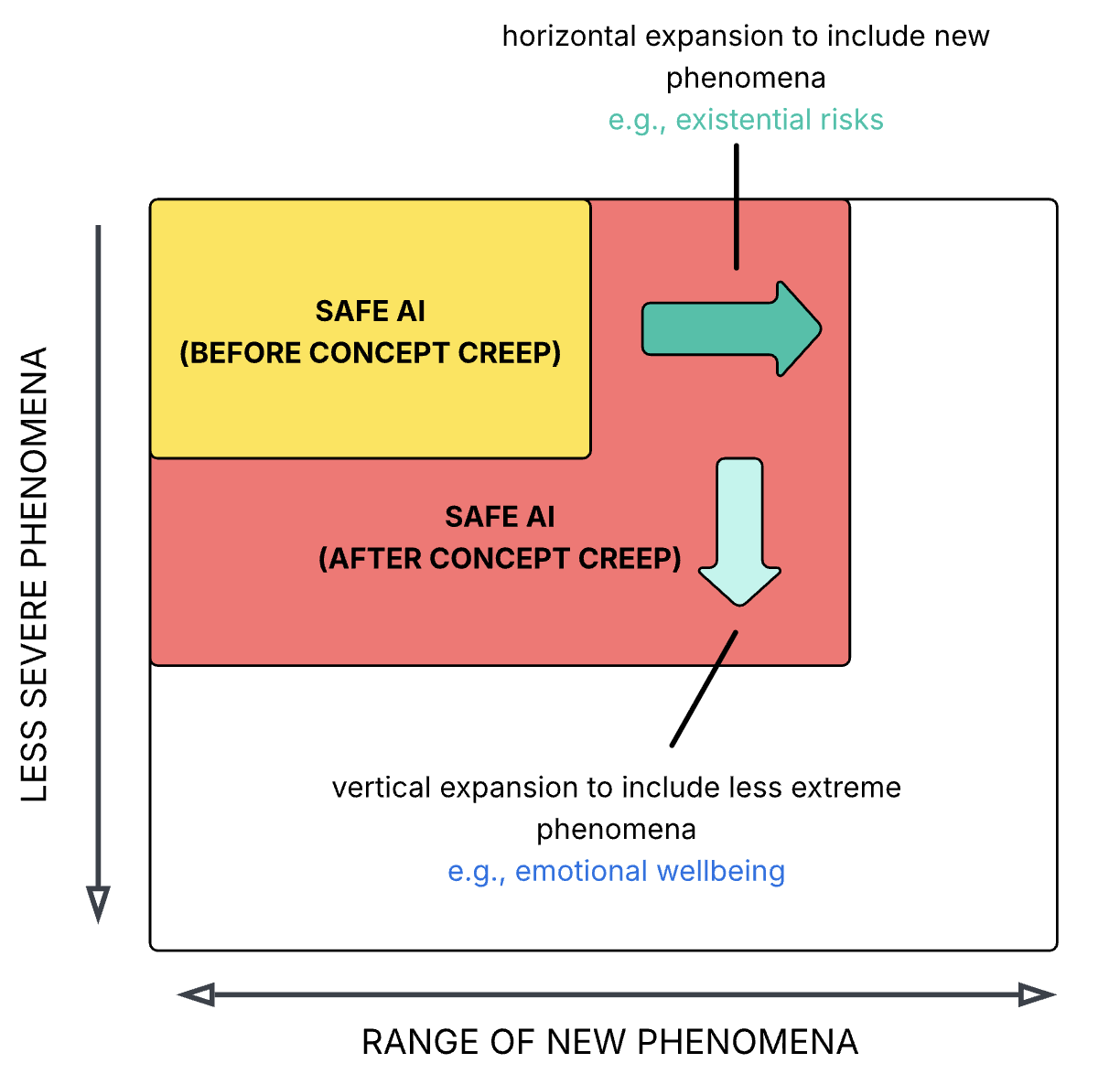

Concept Creep in Safe AI

This project, led by Dr Laura Fearnley, SAINTS Co-Research Lead, argues that the concept of “safety” in AI has undergone concept creep, a phenomenon which describes the gradual semantic expansion of harm-related concepts. First identified in psychology, concept creep describes how concepts broaden vertically to include less severe phenomena and horizontally to encompass qualitatively new ones. In this paper, Dr Laura Fearnley and Professor Ibrahim Habli, SAINTS Director, argue that safety in the context of AI has expanded along both dimensions.

The paper’s analysis contrasts a baseline definition of safety, grounded in traditional safety science, with contemporary uses of the term in AI discourse. It demonstrates that safety has crept horizontally to include phenomena such as systemic injustice and existential risks, and vertically to cover less severe concerns related to mental or emotional wellbeing. The primary aim of the work is to map the conceptual expansion of safety. The paper stops short of arguing whether this expansion constitutes progress or regress for the field. However, the authors suggest that some of the promising developments and the problematic trends recently witnessed within AI safety discourse can be understood, at least in part, as a consequence of concept creep.

SAINTS researcher Poppy Fynes attends the 5th International Conference on Advanced Railways and Transportation

Poppy Fynes and colleagues submitted a paper on autonomous railways, ‘Milestone Determination for Autonomous Railway Operation’, to the 5th International Conference on Advanced Railways and Transportation (ICART) 2025, which they attended in Korea in November 2025.

Poppy shared with us the following:

In our paper, we argue that autonomous railway systems cannot rely on generic computer vision datasets and instead require route-specific contextual modelling, which we address through the concept of milestones—key points along a route that trigger operational state transitions much like human drivers do.

We then test this idea using a large dataset generated in the OpenBVE simulator, comparing a standard Gaussian Naïve Bayes model with our OwO method (The Observed weight of an Output). OwO applies milestone-dependent weights, meaning that different features—such as speed limits, acceleration, or braking rate—are given more or less importance depending on the current state. This leads to stronger and more context-aware predictions.

Our results show that OwO improves performance by around 5–25% across most states, especially when the train is adjusting speed or braking. These findings support our argument that milestone-driven, context-rich modelling provides a more reliable foundation for autonomous rail. We finish by suggesting future work on visual milestone detection and reinforcement learning to make the system more adaptable in real-world scenarios.
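For readers curious about what state-dependent feature weighting can look like in practice, here is a minimal sketch. It is not the authors' OwO implementation, whose details are not given here. It assumes, purely for illustration, that a milestone-dependent weight acts as a multiplier on each feature's log-likelihood in a Gaussian Naïve Bayes classifier. The feature names, toy data, milestone names and weight values are all hypothetical.

```python
import math
from collections import defaultdict


def fit_gaussian_nb(X, y):
    """Estimate a log-prior plus per-feature mean and variance for each class."""
    by_class = defaultdict(list)
    for row, label in zip(X, y):
        by_class[label].append(row)
    model = {}
    for label, rows in by_class.items():
        cols = list(zip(*rows))
        means = [sum(c) / len(c) for c in cols]
        # A small floor keeps the variance strictly positive.
        variances = [
            sum((v - m) ** 2 for v in c) / len(c) + 1e-6
            for c, m in zip(cols, means)
        ]
        model[label] = (math.log(len(rows) / len(X)), means, variances)
    return model


def log_gaussian(x, mean, var):
    """Log-density of a univariate Gaussian."""
    return -0.5 * (math.log(2 * math.pi * var) + (x - mean) ** 2 / var)


def predict(model, x, weights=None):
    """Return the class with the highest (optionally feature-weighted) log-score."""
    if weights is None:
        weights = [1.0] * len(x)  # plain Gaussian Naive Bayes
    scores = {}
    for label, (log_prior, means, variances) in model.items():
        scores[label] = log_prior + sum(
            w * log_gaussian(xi, m, v)
            for w, xi, m, v in zip(weights, x, means, variances)
        )
    return max(scores, key=scores.get)


# Hypothetical toy data: each row is [speed_kmh, braking_rate].
X = [[80, 0.0], [82, 0.1], [78, 0.0], [40, 0.9], [42, 1.0], [38, 0.8]]
y = ["cruise", "cruise", "cruise", "brake", "brake", "brake"]
model = fit_gaussian_nb(X, y)

# Hypothetical milestone-dependent weights (assumed values, not from the paper):
# near a station, braking rate counts for more and speed for less.
MILESTONE_WEIGHTS = {
    "open_track": [1.0, 1.0],
    "approaching_station": [0.2, 2.0],
}

sample = [70, 0.7]  # still fairly fast, but braking noticeably
print(predict(model, sample))
print(predict(model, sample, MILESTONE_WEIGHTS["approaching_station"]))
```

On this toy data, the unweighted model is dominated by the speed feature, while the assumed "approaching_station" weights let the braking rate decide the outcome. That is the general intuition behind giving features more or less importance depending on the current state.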

The second episode of The Silver LAIning: A SAINTS Podcast is now live!

We were pleased to have Margaret Colling, a former librarian and one of the 11 members of the UK public chosen to form the “People’s Panel on AI” in 2023. Through a facilitated deliberation process, the panel put forward recommendations for the government, industry, academia, and civil society.

Since her participation, Margaret has actively advocated for the public’s role in addressing the potential ethical and societal risks of AI. Listen in to learn more about her newly found purpose, advancing the conversation on the safety of AI.

Launch of a brand new SAINTS Podcast

Last week, SAINTS and Prenika Anand (a second-year PhD Researcher) launched The Silver LAIning: A SAINTS Podcast.

Prenika is a SAINTS PhD Researcher exploring Psychological Safety of AI for Older Adults and is the creator and host behind the podcast.

On her motivation behind the series, Prenika says:

“I feel that at most academic and media forums, the intersection of ageing, AI and safety is under-discussed. One of my endeavors is to help bring this conversation to the forefront, even as I conduct my PhD research.”

As AI integrates into health and social care, the podcast dives into conversations with stakeholders to analyse the benefits and risks AI presents, particularly for older adults.

In the inaugural episode, Prenika sat down with Professor Dianne Willcocks, a socio-gerontologist who has dedicated more than four decades to advocating for older people’s wellbeing. They discuss how older people adapt to digital technologies in general, along with current perspectives on the topic.

Supported by the UKRI AI Centre for Doctoral Training in Safe Artificial Intelligence Systems (SAINTS), this multidisciplinary podcast series is intended to create accessible briefings for anyone interested in the intersections of ageing, AI and safety.

SAINTS at TAROS 2025

Between 20 and 22 August 2025, some of our SAINTS CDT postgraduate researchers attended the TAROS (Towards Autonomous Robotic Systems) conference, hosted at the University of York.

The UK-hosted international conference on Robotics and Autonomous Systems (RAS) aims to present and encourage discussion of the latest results and methods in autonomous robotics research and applications.

SAINTS’ Prenika Anand had the opportunity to present work from the first year of her PhD at TAROS 2025. In a special session on Safety of Autonomous Systems, chaired by Philippa Ryan, Senior Research Fellow in Safety of Autonomy and AI, she presented a talk and an academic poster on Psychological Safety of AI in Assisted Living. Prenika also won the D-RisQ Award for best poster on Safety for Autonomous Systems and Robotics.

Prenika said: “To talk as a health professional to an auditorium full of roboticists was exciting! I equally enjoyed listening to talks by my PhD colleagues Shaun F., also a SAINTS PGR; Nawshin Mannan Proma (Doctoral Researcher & Graduate Teaching Assistant, Institute for Safe Autonomy); and Rabia Karakaya (PhD researcher at the University of York, specializing in human-robot interaction in autonomous mobile robots in public spaces).

“The questions and supportive feedback from the audience, and winning a prize for the Best Poster in this category, were just the motivation needed after months of synthesising evidence from literature.”